7 Ways to Improve Crawling and Indexability

To Improve Your Google Presence...

There are over 1 billion websites on the internet, and Google must sort through billions of online documents to produce search results in a fraction of a second. To accomplish this, it crawls and indexes websites to compile a massive database of the most important information available.

Contrary to popular belief, Google doesn't continuously check your website to see if there's anything fresh and interesting. If you want to appear in Google search results, you must make your site crawlable and indexable.

Here are 7 steps to help you increase your Google presence:

Set up an XML sitemap

An XML sitemap is a file you create to tell search engine crawlers what pages are on your website, how they're laid out, and which ones to look at. Sitemaps are meant to be read by machines. Although they aren't meant for your visitors to see, they help search engines crawl and index your website.

Google notes that sitemaps are particularly useful if:

- Your website is new and has few external links pointing to it.

- Your site has many pages that need to be indexed (e.g., e-commerce product pages).

- Your website lacks a robust internal linking structure.

Many content management systems generate an XML sitemap automatically, but a website built from scratch might not have one. If you're unsure whether you have one, ask your tech team to confirm that it exists and has been submitted to Google Search Console (formerly Google Webmaster Tools).
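If your site does not produce a sitemap on its own, one can be generated with a short script. The following is a minimal sketch using Python's standard library and the sitemaps.org 0.9 format. The function name `build_sitemap` and the example URLs are placeholders, not part of any particular CMS.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol (version 0.9).
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Return sitemap XML as a string for a list of (url, last_modified) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url, last_modified in pages:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
        if last_modified:  # <lastmod> is optional in the protocol
            ET.SubElement(entry, "lastmod").text = last_modified
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical pages; replace these with the URLs of your own site.
example_pages = [
    ("https://www.example.com/", "2024-01-15"),
    ("https://www.example.com/products/blue-widget", None),
]
sitemap_xml = build_sitemap(example_pages)
print(sitemap_xml)
```

The resulting text would typically be saved as `sitemap.xml` at the root of the site before being submitted to Google.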

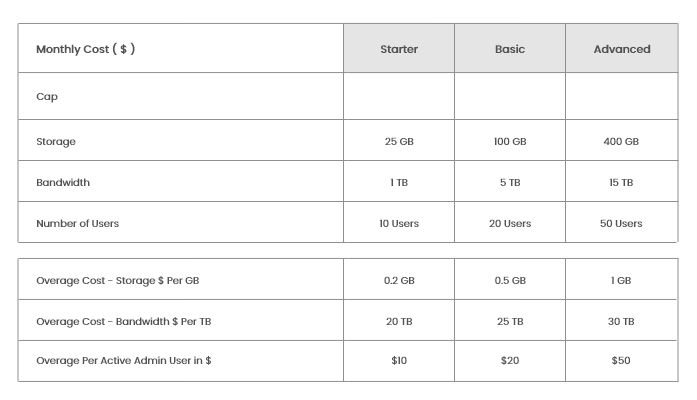

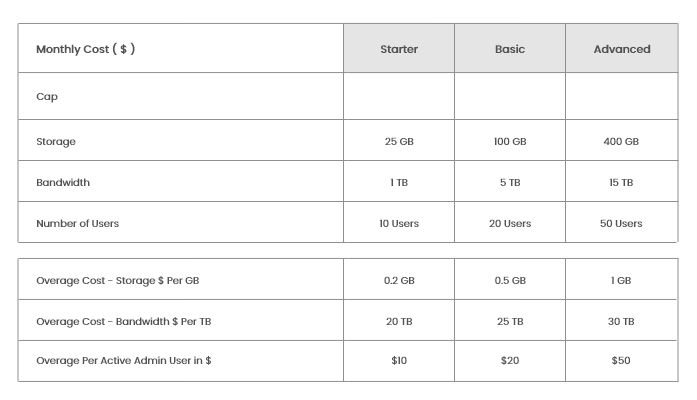

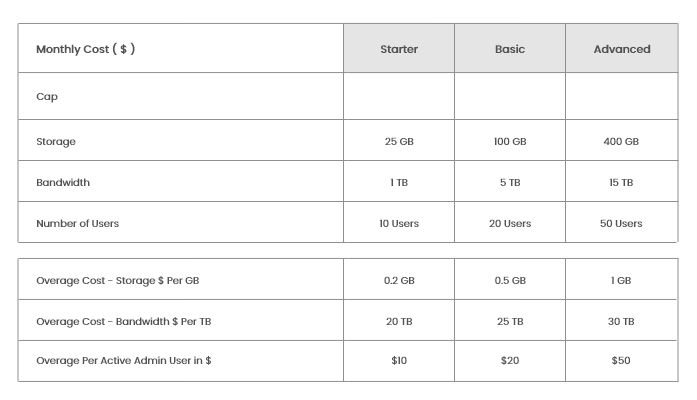

Hocalwire produces reports that help with planning advertising and sales initiatives. To improve SEO rankings and drive organic traffic, Hocalwire CMS has SEO keywords, metadata, alt text, and sitemap structures built in. With the help of XML sitemaps, Hocalwire CMS can organize a disorganized website and make it searchable.

Reduce 404 errors

Googlebot works within a crawl budget, which limits how much time it can spend on each website before it must move on. If your site frequently returns 404 errors, that budget is wasted.

A 404 error occurs when a user or search engine bot tries to access a page that is no longer available, such as:

- A product landing page for something you don't sell anymore

- A deal that has already expired

- A page that you removed

- A URL that you changed

Keep these 404 pages to a minimum. When search crawlers hit a dead end and can't navigate any further, your crawl budget is spent on pages that provide no value.

Redirects that guide visitors to pages with pertinent information can help you avoid them.
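Before you can redirect missing pages, you need to know which URLs return a 404. The sketch below is one way to check a list of known URLs with Python's standard library. The function names are illustrative, and the status lookup is passed in as a parameter so that the logic can be tried without network access.

```python
import urllib.error
import urllib.request


def fetch_status(url):
    """Return the HTTP status code for a URL (performs a real network request)."""
    # HEAD avoids downloading the page body; some servers may not support it.
    request = urllib.request.Request(url, method="HEAD")
    try:
        with urllib.request.urlopen(request, timeout=10) as response:
            return response.status
    except urllib.error.HTTPError as error:
        return error.code  # 4xx and 5xx responses are raised as HTTPError


def find_missing_pages(urls, get_status=fetch_status):
    """Return the URLs that respond with 404 Not Found."""
    return [url for url in urls if get_status(url) == 404]


# Offline demonstration with made-up status codes instead of real requests.
fake_statuses = {
    "https://www.example.com/": 200,
    "https://www.example.com/expired-deal": 404,
}
print(find_missing_pages(list(fake_statuses), get_status=fake_statuses.get))
```

Each URL reported this way is a candidate for a redirect to the most relevant live page.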

For any 404 pages that remain, give them a custom look and feel, with instructions that tell the user what to do next.

Use robots.txt wisely and check for crawling issues

Use robots.txt judiciously if you want to increase your search visibility. A robots.txt file contains directives that tell Google's search crawlers whether or not to look at a certain page, folder, or directory.

Why would you use one?

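- To prevent duplicate content problems, for example after a redirect

- To keep crawlers away from pages that contain customers' private information (robots.txt is not a security measure, so such pages still need access controls)

- To keep Google from crawling a website that is already live but not yet ready to be seen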

Robots.txt instructs search crawlers not to access specific pages, which lets them move on to the pages that Google should index.

Not all websites need a robots.txt file, and not all websites have one. Use robots.txt only when necessary. Incorrect directives can cause crawl issues, which can prevent your site from being indexed and from ranking in search results. Google Search Console lets you check for crawl issues on your website.
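One way to catch an incorrect directive early is to test your important URLs against the robots.txt rules before publishing them. The sketch below uses `urllib.robotparser` from Python's standard library. The robots.txt content and URLs are hypothetical, and Python's parser may not match Google's own rule matching in every detail, so treat the result as a first check only.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; the paths are placeholders.
ROBOTS_TXT = """\
User-agent: *
Disallow: /staging/
Disallow: /account/
"""


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given rules do not allow the crawler to fetch."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Pages you expect Google to index; any that appear in the output are blocked.
important_pages = [
    "https://www.example.com/",
    "https://www.example.com/products/blue-widget",
    "https://www.example.com/staging/new-home",
]
print(blocked_urls(ROBOTS_TXT, important_pages))
```

If a page you want indexed shows up in the output, the matching `Disallow` rule is a likely cause of the crawl issue.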

Make a plan for internal linking

Google's crawlers follow links. As they discover more links to a piece of content, that content is cached and added to Google's index. When they come to a dead end, they either stop crawling or turn around to find new paths.

Making your site easy to crawl with an internal linking strategy and deep links is one of the simplest ways to ensure that it gets indexed.

Deep links are beneficial for both crawlability and user experience. You can use these hyperlinks to direct users and web crawlers to relevant pages on your website.

In a blog post about Facebook, for instance, you might add a deep link on the phrase "social media marketing" that directs readers to a landing page outlining your social media marketing services. You might also include a link to a relevant article, case study, or client testimonial.

The objective is to make sure the most important pages on your website are easy to find and can be reached in just a few clicks.
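To see how many clicks each page is from the homepage, you can run a breadth-first search over your internal links. The sketch below assumes you already have a mapping from each page to the pages it links to (for example, from a crawl export). The paths and the threshold of two clicks are illustrative assumptions.

```python
from collections import deque


def click_depths(links, start):
    """Breadth-first search: minimum number of clicks from start to each page."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest path
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical internal link graph: page -> pages it links to.
site_links = {
    "/": ["/blog", "/services"],
    "/blog": ["/blog/facebook-tips"],
    "/blog/facebook-tips": ["/services/social-media-marketing"],
    "/services": [],
}
depths = click_depths(site_links, "/")

# Pages more than two clicks from the homepage are candidates for new deep links.
deep_pages = [page for page, depth in depths.items() if depth > 2]
print(deep_pages)
```

In this example the social media marketing landing page sits three clicks deep, so linking to it directly from the services page would bring it closer to the homepage.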

Create text versions of visual materials

Although visual material is excellent for user experience, search crawlers get far less out of it. Google's bots can recognize the file formats on your website, such as MP4s, JPGs, GIFs, and PNGs, but they have limited ability to work out what the content is about. As a result, you may have useful resources with high keyword and SEO value that are being overlooked.

To fix this, publish written transcripts alongside your multimedia files. Google's bots can crawl the text and index the links and keywords it contains. (It's also a smart idea to submit a dedicated XML video sitemap to Google for indexing.)
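For images, the usual text alternative is the `alt` attribute. As a rough audit, the sketch below scans a page's HTML for `<img>` tags with a missing or empty `alt`, using Python's built-in `html.parser`. The class and function names are illustrative. An empty `alt` can be legitimate for purely decorative images, so review the results rather than treating every hit as an error.

```python
from html.parser import HTMLParser


class MissingAltFinder(HTMLParser):
    """Collect the src of every <img> tag without a non-empty alt attribute."""

    def __init__(self):
        super().__init__()
        self.missing = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        # A valueless attribute is reported as None, so fall back to "".
        if not (attributes.get("alt") or "").strip():
            self.missing.append(attributes.get("src") or "(no src)")


def images_missing_alt(html):
    """Return the src values of images that have no usable alt text."""
    finder = MissingAltFinder()
    finder.feed(html)
    return finder.missing


# Hypothetical page fragment for demonstration.
sample_html = (
    '<img src="chart.png">'
    '<img src="team.jpg" alt="Our support team at work">'
    '<img src="banner.gif" alt="">'
)
print(images_missing_alt(sample_html))
```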

Search engine optimization is a dynamic process. To move up in Google's search results and reach your target audience, you must keep up with the most recent developments and adjust your strategy.

If possible, keep duplicate pages off your website

Duplicate content is content on your site that copies content available elsewhere. Changing a few words won't always help, because content doesn't have to be an identical replica to count as a duplicate. Google may lower the visibility of pages with duplicate content, which can drastically reduce your traffic.

If duplicate pages are unavoidable, use the correct canonical tags to tell search engines which version is the preferred one.

Examine whether you really need each filter, and identify the pages that are producing duplicates.

Remove anything that is not required.
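Filter and sort options often create several URLs for the same content. The sketch below shows one possible way to group such URLs and produce a canonical tag for them. It assumes that the listed query parameters (`sort`, `color`, `utm_source`) do not change the main content, which is an assumption you would need to verify for your own site.

```python
from collections import defaultdict
from html import escape
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed filter/tracking parameters that do not change the main content.
IGNORED_PARAMS = {"sort", "color", "utm_source"}


def canonical_url(url):
    """Strip the ignored query parameters and any fragment from a URL."""
    parts = urlsplit(url)
    kept = [(k, v) for k, v in parse_qsl(parts.query) if k not in IGNORED_PARAMS]
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


def group_duplicates(urls):
    """Map each canonical URL to the list of variants that collapse onto it."""
    groups = defaultdict(list)
    for url in urls:
        groups[canonical_url(url)].append(url)
    return {canon: members for canon, members in groups.items() if len(members) > 1}


def canonical_link_tag(url):
    """Build the <link rel="canonical"> tag to place in the page's <head>."""
    return f'<link rel="canonical" href="{escape(canonical_url(url), quote=True)}">'


# Hypothetical URLs produced by product filters.
crawled = [
    "https://www.example.com/shoes",
    "https://www.example.com/shoes?sort=price",
    "https://www.example.com/shoes?color=red&sort=price",
    "https://www.example.com/hats",
]
print(group_duplicates(crawled))
print(canonical_link_tag("https://www.example.com/shoes?sort=price"))
```

Groups with several members point to filters worth reviewing: either remove the filter or add the canonical tag to each variant.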

Make sure there are no orphan pages on your website

Orphan pages are pages that no other page on your website links to, which makes it nearly impossible for visitors to find them. Google is unlikely to discover these pages either, so they serve little purpose. If you have any, fix the problem by adding them to your website's navigation structure or by creating internal links to them from other pages.
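Orphan pages can be found by comparing the full list of pages on your site (from your sitemap or CMS, for example) with the pages that receive at least one internal link. The following is a minimal sketch of that comparison. The page list and link graph are made-up examples, and the homepage is treated as an entry point that needs no inbound link.

```python
def find_orphans(all_pages, links, entry_points=("/",)):
    """Return pages that no other page links to, excluding entry points."""
    linked = set()
    for source, targets in links.items():
        # A page linking to itself does not count as an inbound link.
        linked.update(target for target in targets if target != source)
    return sorted(set(all_pages) - linked - set(entry_points))


# Hypothetical inventory of pages and the internal links found on each one.
all_pages = ["/", "/blog", "/services", "/old-landing-page"]
internal_links = {
    "/": ["/blog", "/services"],
    "/blog": ["/"],
    "/old-landing-page": ["/services"],
}
print(find_orphans(all_pages, internal_links))
```

Here `/old-landing-page` links out to other pages but nothing links to it, so it is reported as an orphan.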

Hocalwire CMS handles the technical parts of SEO for enterprise sites, such as maintaining large sitemaps, getting pages indexed by Google, optimizing page load times, maintaining assets and file systems, and warning about broken links and pages, while you handle the non-technical components. If you're searching for an enterprise-grade content management system, these are significant value adds. To learn more, get a free demo of Hocalwire CMS.